Unit 8: Implementing Learning Analytics

Welcome to Unit 8. While student data have long been collected and used, there is additional work associated with the widespread implementation of learning analytics. There is a need to understand who the stakeholders are, to identify the systems and processes needed to implement learning analytics, and to then design and operationalise responsive and integrated systems and processes. Together in this unit, we explore the dimensions that will put you one step further into applying learning analytics for your course or institution!

Learning outcomes

After you have worked through this unit, you should be able to:

- conduct a SWOT analysis of your local institution/course context for the implementation of learning analytics

- explore various open applications for implementing learning analytics in your own (course) context

- compile a list of stakeholders that you would invite to an information session on the potential to implement learning analytics, or to further improve and increase the impact of learning analytics

In Unit 7, you were asked to think of who the stakeholders in learning analytics might be. You may have thought of who contributes to the model of learning analytics in your class, or within the wider institution. We’d guess that you thought of at least two key players: learners and teachers. But there are many others who routinely engage with learning analytics. In fact, stakeholders differ depending on the context (e.g., higher education versus primary schools), yet common key players take on similar roles.

According to two studies (Drachsler & Greller, 2012; Leitner et al., 2017), four common stakeholders appear repeatedly when implementing learning analytics, regardless of the context: learners, teachers/tutors, researchers/administrative staff, and the institution itself. Other stakeholders that might also be involved include course designers, data keepers, governments, parents, senior leaders, teaching assistants, quality assurance, information technology staff, marketing staff, and privacy and ethics governors.

One helpful approach to identifying your key stakeholders is to follow the questions within the SHEILA framework.[31] We will refer to the section covering stakeholders next and dig deeper into SHEILA in the following section.

[31] https://sheilaproject.eu/wp-content/uploads/2018/08/SHEILA-framework_Version-2.pdf

Case study on identifying stakeholders in learning analytics

Laila is a learning analytics expert who has started to work at a local school. The senior management team asked Laila to start planning for implementing learning analytics at their school, since learning and teaching processes have been rapidly moved online. The school’s major objectives are to provide performance metrics to parents and to intervene when learners appear to fall within a danger zone of failing in classes. Laila wants to identify the key people she needs to talk to so that the implementation of learning analytics at the school is successful and achieves the proposed objectives. She uses the SHEILA framework to identify the personnel as follows:

Identify primary users of learning analytics

Laila selects learners and teachers as the main stakeholders for her approach.

Identify senior management

There are many levels of management at the school, including the head teacher, deputy principal, assistant principals (curriculum for learning, standards for learning), school managers, and a digital learning advisor. Laila plans to talk to each to see where their decision-making responsibilities and insight will support her implementation.

Identify professional team

She selects the IT support, student welfare and support staff, and a key member of the school office team to support her here.

Select academic team

Since the context of the learning analytics in the school is computer-based, she involves the rapidly formed digital-learning group and the department leads for each curriculum area.

Identify external parties

Laila decides that the parents of the learners are fundamental, given that they have the legal guardianship of the learners involved and will be recipients of some of the metrics.

Identify required expertise

Laila singles out other expertise needed. She wants to involve didactics expertise, IT expertise, learning analytics expertise and statistics expertise to help the professional team in carrying out the implementation.

After spending four months working on this project, Laila was able to identify the major parties needed to achieve the school’s goals of implementing learning analytics. Selecting the right stakeholders was a great start in helping Laila and the school support learners and parents.

SHEILA is a project that supports European higher education institutions in managing and using their digital learner data. By conducting a broad range of in-depth interviews and surveys with learning analytics stakeholders, teachers and learners, the project developed a framework to assist higher education institutions to develop, implement and evaluate their uses of learning analytics. The SHEILA framework provides a sound foundation on which you can base the various stages of your own learning analytics approach.

To support you in this, we summarise the framework and highlight the major components.[32] Figure “SHEILA framework dimensions and components” illustrates the six dimensions of the SHEILA framework. Each dimension also includes a relevant action, challenges and policy prompts for use in your future policy development.

[32] https://sheilaproject.eu/sheila-framework/create-your-framework/

Dimension 1 – Map political context:

The first dimension asks you to identify the internal and external drivers for learning analytics:

- Action: Think of the political context and identify opportunities.

- Challenges: What are the challenges that may exist in the political context?

- Policy: Think about reasons for adopting learning analytics and how your institutional objectives line up with those reasons.

Dimension 2 – Identify stakeholders:

In the second dimension, you need to identify key stakeholders. We have touched on this already in Unit 7 and revisited it briefly in section “Learning analytics stakeholders” of this unit.

- Action: Think of the key personnel to implement the learning analytics model.

- Challenges: What are the challenges of involving some of the stakeholders? Are there any risks if you want to involve learners?

- Policy: Think about how you will get consent. (Hint: re-read Unit 7).

Dimension 3 – Desired behaviour changes:

Identify the changes to your institution, course or stakeholders that might arise when implementing learning analytics.

- Action: Think of the changes that could occur when learning analytics is implemented.

- Challenges: Think about ethics and privacy, infrastructure, capabilities, management, etc.

- Policy: How might you communicate with relevant actors about the changes?

Dimension 4 – Develop engagement strategy:

- Action: What would you need to carry out the changes in dimension 3? Think of who to appoint. Does your project need funding? Think about resources for that!

- Challenges: What are the challenges that will hinder your development of the engagement strategy?

- Policy: What type(s) of data do you collect, analyse and report? (Hint: see Unit 4.)

Dimension 5 – Analysis of the internal capacity to effect change:

How ready is your institution to change based on dimensions 3 and 4?

- Action: Think about evaluating your institution/course infrastructure to handle any changes. Would this need training? Would there be any cultural issues to consider?

- Challenges: Think about human capital and infrastructure. What are the challenges that might slow or prevent the planned changes?

- Policy: What changes might be needed to your institutional policies?

Dimension 6 – Monitoring and learning frameworks:

Establish indicators and measures for a successful implementation.

- Action: List indicators and measurable metrics.

- Challenges: Failing to recognise and address the limitations of data and analytics models.

- Policy: Identify the limitations of learning analytics in your institution — i.e., what can be done and what not?

At this stage, we have covered a lot. You have learnt about data-driven approaches in education; the evidence of data; the difference between learning analytics and other derivations; uses of learning analytics; tools; frameworks; and ethical and privacy concerns. Since this unit focuses on implementing learning analytics, we would like you to carry out a short activity to help you prepare. On your own device (or even using a pen and paper!), identify the strengths, weaknesses, opportunities and threats related to your course or institution when implementing learning analytics. Use the matrix below to guide your thoughts.

Have you ever wondered about how learning analytics work with educational data? Do you want to try some off-the-shelf tools? Then welcome to this part of the course! Unit 6 has already highlighted a number of available tools, but here we provide another that you can quickly experiment with.

EdX Logfile Analysis Tool (ELAT)

As you now know, learning analytics is concerned with both small and big data, and data sources vary in size and level. We would like to provide you with an example from the world of MOOC learning platforms. As their name indicates, MOOCs can have lots of students, content and data!

Activity: Test a web-based tool

The Technical University of Delft, in the Netherlands, has developed a tool (ELAT[33]) that analyses data from the edX[34] MOOC platform (see Figure “ELAT snapshots”). You do not need advanced skills to use ELAT, nor do you need data! This tool is browser based and provides a sample data set for you to play with. We are delighted to share it with you. Access the tool via https://mvallet91.github.io/ELAT-Workbench/, where you are offered a range of descriptive statistics and advanced functionalities, including machine learning and static and interactive visualisations. Check out the tool and navigate around. Use the functions of ELAT, and read the quick guide in case you need further help.

[33] https://mvallet91.github.io/ELAT-Workbench/

[34] http://edx.org

OnTask

In this section, we share another principal case study of learning analytics implementation. OnTask[35] is an open-source tool developed by several Australian universities and funded by the Office of Learning and Teaching of the Australian Government. OnTask aims to improve the learning experience by providing learners with timely, personalised and actionable feedback throughout their course. The software can be downloaded and used on your local machine. There is also a discussion forum available for inquiries if you need help. See Figure “OnTask screenshot” for a screenshot of the tool.

So how can you use OnTask? Here’s one example of how it might help:

Scenario:

You are a teacher who wants to send customised messages by e-mail to your learners based on specific conditions. You create three customised messages for your learners who

a) have not introduced themselves to their peers,

b) have not submitted their assignments on time or

c) have not watched at least 75% of the course videos.

OnTask provides “if-this-then-that rules” that you can use for those cases. In very simple terms, you have the following options here:

[35] https://www.ontasklearning.org/

- For case (a), you program the tool to send “Dear student x. You’ll find it helpful to discuss course issues in the forum. As soon as you can, try to make sure you introduce yourself to your peers.”

- For case (b), you program the tool to send a message stating: “Well done for getting your assignment in, although you missed the course deadline. Please aim to submit the next assignment of course x on time. If you are having problems keeping up, please do contact me.”

- For case (c), you will want to use three conditions — let’s say: if the learner fails to watch any videos, or views up to 50%, or watches at least 75%. You teach the tool to send a tailored message: If a learner never watches the videos, then “Dear student x. In order for you to answer the self-assessment questions, it is really important that you watch the course x videos”; if student x watches up to 50%, then, “Student x, keep up the good work! There are still more videos for you to watch.” The final condition could trigger, “Student x, I’ve noticed you have watched most of the course videos. Great work — keep going!”
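The three cases above can be sketched as plain conditional rules. The snippet below is only an illustration of the "if-this-then-that" idea in Python, not OnTask's own rule syntax or API. The `Learner` class, its field names and the function names are hypothetical and were introduced for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Learner:
    # Hypothetical fields for illustration; these are not OnTask column names.
    name: str
    introduced_self: bool
    submitted_late: bool
    video_percent: float  # share of course videos watched, from 0 to 100


def video_message(learner: Learner, course: str) -> Optional[str]:
    """Case (c): choose a message from the three viewing conditions."""
    if learner.video_percent == 0:
        return (f"Dear {learner.name}. In order for you to answer the "
                f"self-assessment questions, it is really important that "
                f"you watch the {course} videos.")
    if learner.video_percent <= 50:
        return (f"{learner.name}, keep up the good work! There are still "
                f"more videos for you to watch.")
    if learner.video_percent >= 75:
        return (f"{learner.name}, I've noticed you have watched most of the "
                f"course videos. Great work, keep going!")
    # The scenario defines no message between 50% and 75%, so send nothing.
    return None


def messages_for(learner: Learner, course: str) -> List[str]:
    """Collect every message whose condition holds for this learner."""
    messages = []
    if not learner.introduced_self:  # case (a)
        messages.append(
            f"Dear {learner.name}. You'll find it helpful to discuss course "
            f"issues in the forum. As soon as you can, try to make sure you "
            f"introduce yourself to your peers.")
    if learner.submitted_late:  # case (b)
        messages.append(
            f"Well done for getting your assignment in, although you missed "
            f"the course deadline. Please aim to submit the next assignment "
            f"of {course} on time. If you are having problems keeping up, "
            f"please do contact me.")
    video = video_message(learner, course)
    if video is not None:
        messages.append(video)
    return messages


if __name__ == "__main__":
    amina = Learner("Amina", introduced_self=False,
                    submitted_late=True, video_percent=40)
    for text in messages_for(amina, "course x"):
        print(text)
```

Note that the conditions as described leave learners who watched between 50% and 75% of the videos without a message. In a real deployment you would probably want to decide explicitly which message, if any, that group should receive.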

[36] https://www.ontasklearning.org/wp-content/uploads/OnTask-Brief-Description-v1.pdf

Analytics for teaching

The path to good learning starts with good teaching. In this example, we highlight how learning analytics can be used for documenting teaching and learning through dashboard designs. An initiative from Utah State University [37] shows designs of visualisations and analytics that could be used to review and improve learning, teaching and course designs (Figure “Two dashboard views of analytics from Utah State University” provides examples of two dashboard views).

[37] https://cidi.usu.edu/Analytics

Two dashboard views of analytics from Utah State University.

The top figure shows the relationship between learners’ final grade and their course views. The bottom figure shows teacher interactions with the content and learners over time.

A teacher engaging with the first dashboard view above may use the links between student engagement with different aspects of the course and the final grade distribution. They might then reflect and opt to restructure certain components, by editing content, say, or by leaving messages on less-viewed pages.
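If you export similar data from your own LMS, one simple way to quantify the relationship shown in the first view is a Pearson correlation between course views and final grades. The sketch below is a minimal illustration and is not how the Utah State University dashboards are built. The function name and the sample numbers are made up for this example.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equally long series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a series is constant")
    return covariance / sqrt(spread_x * spread_y)


if __name__ == "__main__":
    # Invented sample data: one entry per learner.
    course_views = [12, 30, 45, 60, 80]
    final_grades = [55, 62, 70, 78, 90]
    print(f"correlation: {pearson(course_views, final_grades):.2f}")
```

A value close to 1 suggests that learners who view the course more also tend to earn higher grades, but it does not show that viewing causes the higher grade.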

The second dashboard view provides the teacher with a timeline of their interactions with the learners in the LMS, including discussion forums, comments and announcements. They can quickly see where they are spending the most time at various stages and where further support or signposting might be needed.

For another example and more insights into learning analytics implementation, watch this short video.

Watch Video: https://youtu.be/KGe82vXjsIQ

Video attribution: “Unit 8: Learning Analytics in Action” by Commonwealth of Learning is available under CC BY-SA 4.0.

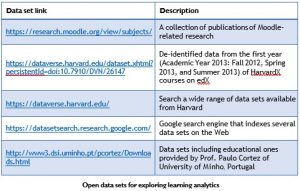

Do you want to try learning analytics, but you lack data? We know that getting your hands on robust data sets is not easy. However, there is a movement to share data, which is similar to the open educational resources (OER) and open research movements. The table below lists some sources of open data sets that you may wish to explore when playing with learning analytics approaches and tools:

In this unit, we covered important aspects of implementing learning analytics. We discussed the advantages of considering stakeholders and shared a case study to illustrate how this might be done. The SHEILA framework was introduced, providing you with a clear and comprehensive framework. We also provided three examples illustrating tools and approaches implemented at educational institutions in different countries (the Netherlands, Australia and the US). Finally, we pointed you toward a set of free and open educational data resources that you may use to try learning analytics! Thank you, and good luck with Unit 9: Developing Policy for Learning Analytics. You are almost there!

a. Researchers are identified as important stakeholders for learning analytics when their roles involve evaluating a learning analytics system.

i. True

ii. False

b. To improve the learning experience at one institution, the learning analytics expert decides to depend largely on interviewing learners to design an effective learning analytics model.

i. True

ii. False

c. Data surveillance is one of many challenges facing learning analytics implementations.

i. True

ii. False

d. According to the SHEILA framework, identifying stakeholders is

i. the first step you should do when implementing learning analytics.

ii. where you can identify areas that might be supported by learning analytics.

iii. where you explore who will be responsible for data controlling.

iv. where you can seek funding for your learning analytics project.

e. Despite data sets being a key corporate asset, they are generally not given the importance they deserve, in terms of making them easy to find and accessible.

i. True

ii. False

f. Which of the following is an example of the third dimension of the SHEILA framework (desired behaviour changes)?

i. Consult relevant policies and codes of practice to implement learning analytics.

ii. Choose analytical models and define measurements of success for your learning analytics approach.

iii. Identify drivers for learning analytics and the objectives behind its implementation.

iv. Adjust the teaching method to fit the implementation of learning analytics.

g. Involving IT staff in your learning analytics implementation is an example of bringing in the:

i. professional team stakeholder.

ii. academic team stakeholder.

iii. external party stakeholder.

iv. professional and external stakeholders.

h. There are several available frameworks to support the regulation of learning analytics within a policy context.

i. True

ii. False

i. There are many learning analytics applications that only function on a single digital/computer-based learning platform.

i. True

ii. False

j. You are asked to map the political context of an existing learning analytics system that seems to be suffering from data surveillance issues. Which of the following would you prioritise?

i. Identify the stakeholders that can help you mitigate the issue.

ii. Contact the IT team and stop the system completely.

iii. Study the reasons and identify the drivers and the institution’s strategy to mitigate the problem.

iv. All of the above.